Platform Tips #31: How to Use LLMs to Enhance Developer Efficiency

Here is how to think about using LLMs to improve developer efficiency in your organization.

Hey Folks 👋,

I'm Romaric, CEO of Qovery, and this is my 31st Platform Tips post.

Since we’re currently exploring how LLMs (Large Language Models) can help us push the Developer Experience of Qovery to the next level, I wanted to share some tips on how you could also use LLMs to make your developers more efficient.

Where LLM Can Help

LLMs are emerging as powerful tools that can be used in many areas. Here, we explore three key areas where LLMs can substantially impact developer efficiency:

Increasing deployment success rates

Reducing deployment times

Uncovering overlooked areas of improvement

Increasing Deployment Success Rates

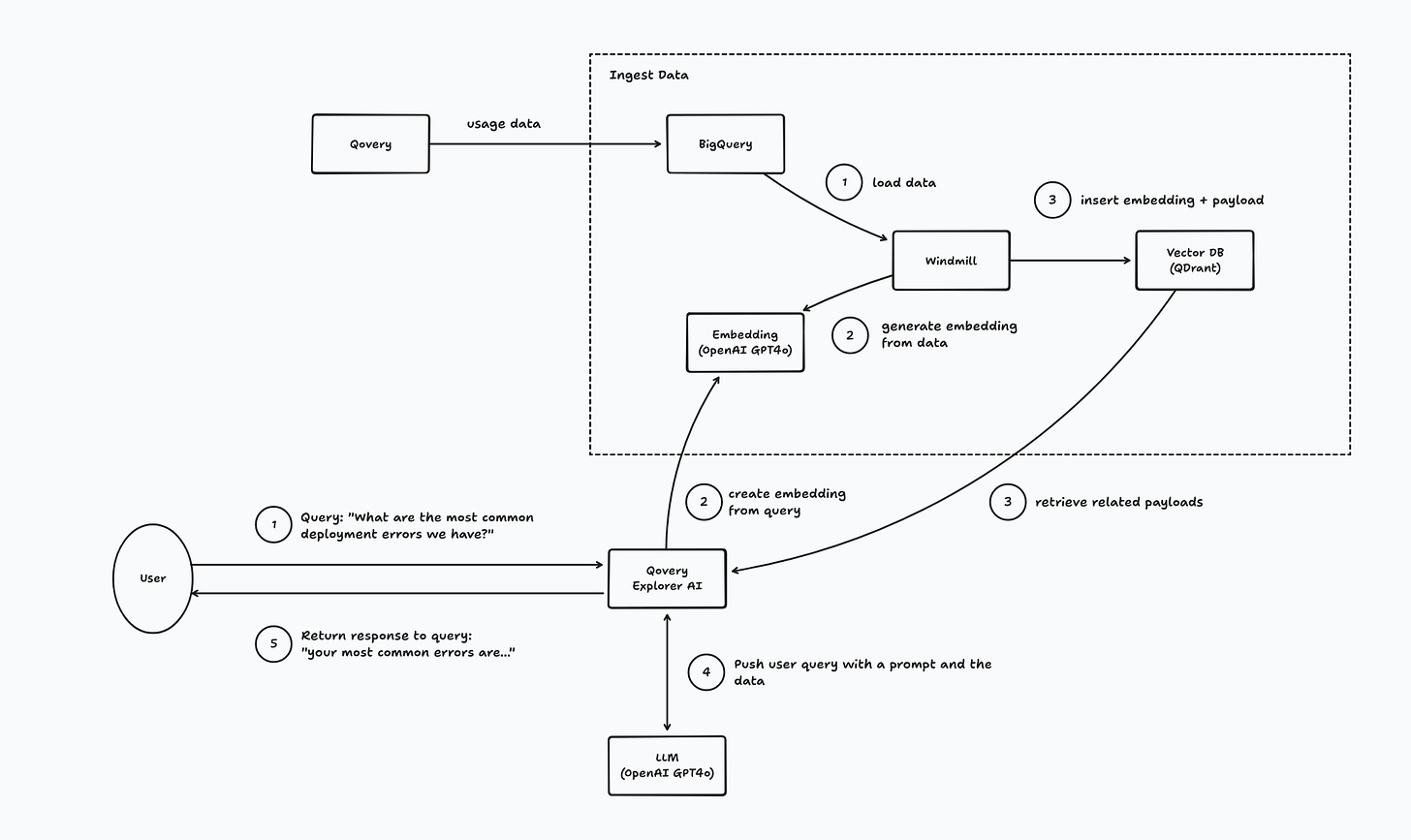

Deployment failures are costly and time-consuming. By ingesting deployment data, including log messages and timestamps, LLMs can summarize where deployments go wrong and suggest areas for improvement. They are good at surfacing recurring patterns and anomalies in large volumes of logs, which can yield actionable insights that are not immediately apparent to human engineers.

For instance, LLMs can highlight frequent failure points in the deployment process, suggest configurations that often lead to success, and recommend best practices tailored to the specific environment. This proactive analysis helps preempt issues, increasing the overall deployment success rate.
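To make this a bit more concrete, here is a minimal sketch of how deployment records could be condensed into a short prompt before handing them to an LLM. Everything in it is an assumption for illustration: the record fields (`status`, `step`, `message`), the sample data, and the helper names `failure_summary` and `build_prompt`. It is not how Qovery does it, and the call to an actual model is deliberately left out.

```python
from collections import Counter

# Hypothetical deployment records; the field names are assumptions for this sketch.
DEPLOYMENTS = [
    {"id": "d1", "status": "failed", "step": "build", "message": "npm ERR! missing script: build"},
    {"id": "d2", "status": "succeeded", "step": "deploy", "message": "rollout complete"},
    {"id": "d3", "status": "failed", "step": "deploy", "message": "readiness probe failed"},
    {"id": "d4", "status": "failed", "step": "build", "message": "npm ERR! missing script: build"},
]


def failure_summary(deployments):
    """Count failures per pipeline step and per error message."""
    failed = [d for d in deployments if d["status"] == "failed"]
    by_step = Counter(d["step"] for d in failed)
    by_message = Counter(d["message"] for d in failed)
    return {
        "total": len(deployments),
        "failed": len(failed),
        "by_step": by_step.most_common(),
        "top_messages": by_message.most_common(3),
    }


def build_prompt(summary):
    """Turn the summary into plain text that could be sent to any LLM."""
    lines = [
        f"{summary['failed']} of {summary['total']} recent deployments failed.",
        "Failures by step:",
    ]
    lines += [f"- {step}: {count}" for step, count in summary["by_step"]]
    lines.append("Most common error messages:")
    lines += [f"- {msg} (x{count})" for msg, count in summary["top_messages"]]
    lines.append("Suggest likely root causes and concrete fixes, most impactful first.")
    return "\n".join(lines)


summary = failure_summary(DEPLOYMENTS)
prompt = build_prompt(summary)
print(prompt)
```

Pre-aggregating like this keeps the prompt small and lets the model focus on interpretation rather than counting, which plain code does more reliably.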

Reducing Deployment Time

Efficiency in build and deployment processes is key for rapid iteration and development. Identifying bottlenecks and optimizing processes can be challenging due to the complexity and volume of data involved. LLMs can act as supercharged data analysts, examining build logs, deployment scripts, and performance metrics to pinpoint areas that cause delays.

By running thorough analyses, LLMs can propose targeted improvements, such as optimizing build scripts, parallelizing tasks, or streamlining dependencies. Moreover, these models can estimate the impact of proposed changes, enabling platform engineers to prioritize efforts that will yield the most significant time savings.
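As a rough illustration of the kind of input that helps here, the sketch below ranks pipeline steps by their average duration and their share of total build time. The event format (build id, step name, ISO start and end timestamps), the sample values, and the function names are all hypothetical. The ranked result is what you might paste into a prompt alongside the build scripts when asking an LLM for optimization ideas.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical step events: (build_id, step, start, end) with ISO 8601 timestamps.
EVENTS = [
    ("b1", "install", "2024-05-01T10:00:00", "2024-05-01T10:04:00"),
    ("b1", "test", "2024-05-01T10:04:00", "2024-05-01T10:06:00"),
    ("b1", "docker-build", "2024-05-01T10:06:00", "2024-05-01T10:12:00"),
    ("b2", "install", "2024-05-01T10:30:00", "2024-05-01T10:34:00"),
    ("b2", "test", "2024-05-01T10:34:00", "2024-05-01T10:36:00"),
    ("b2", "docker-build", "2024-05-01T10:36:00", "2024-05-01T10:42:00"),
]


def average_step_seconds(events):
    """Average duration in seconds of each step across all builds."""
    durations = defaultdict(list)
    for _build_id, step, start, end in events:
        delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
        durations[step].append(delta.total_seconds())
    return {step: sum(values) / len(values) for step, values in durations.items()}


def rank_bottlenecks(averages):
    """Return (step, avg_seconds, share_of_total) tuples, slowest step first."""
    total = sum(averages.values())
    ranked = sorted(averages.items(), key=lambda item: item[1], reverse=True)
    return [(step, seconds, seconds / total) for step, seconds in ranked]


ranked = rank_bottlenecks(average_step_seconds(EVENTS))
for step, seconds, share in ranked:
    print(f"{step}: {seconds:.0f}s ({share:.0%} of build time)")
```

The share of total time gives a simple upper bound on what optimizing a single step can save, which is a useful sanity check on any impact estimate a model proposes.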

Exploring Overlooked Areas of Improvement

LLMs thrive on large datasets, making them ideal for uncovering hidden opportunities for optimization. Unlike human analysis, which can be limited by time and cognitive capacity, LLMs can process and analyze vast amounts of data quickly and comprehensively.

By feeding LLMs extensive deployment and operational data, platform engineers can receive insights into areas that might otherwise go unnoticed. This could include identifying seldom-used but resource-intensive processes, suggesting alternative frameworks or tools, or even predicting future issues based on historical trends.
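One of these cases, seldom-used but resource-intensive processes, is easy to pre-filter before involving a model at all. The sketch below is only an illustration: the job records, the field names, and the thresholds (`max_runs`, `min_cpu_hours`) are made up, and you would tune them to your own platform. The flagged jobs are good candidates to hand to an LLM together with their configuration and ask whether they can be removed, rescheduled, or replaced.

```python
# Hypothetical usage data per job; names, fields, and numbers are illustrative only.
JOBS = [
    {"name": "nightly-full-reindex", "runs_per_month": 2, "cpu_hours_per_run": 40.0},
    {"name": "unit-tests", "runs_per_month": 900, "cpu_hours_per_run": 0.1},
    {"name": "legacy-report", "runs_per_month": 1, "cpu_hours_per_run": 25.0},
    {"name": "lint", "runs_per_month": 900, "cpu_hours_per_run": 0.01},
]


def rare_but_expensive(jobs, max_runs=5, min_cpu_hours=10.0):
    """Flag jobs that run rarely but cost a lot each time, costliest per month first."""
    flagged = [
        job
        for job in jobs
        if job["runs_per_month"] <= max_runs and job["cpu_hours_per_run"] >= min_cpu_hours
    ]
    return sorted(
        flagged,
        key=lambda job: job["runs_per_month"] * job["cpu_hours_per_run"],
        reverse=True,
    )


candidates = rare_but_expensive(JOBS)
for job in candidates:
    monthly = job["runs_per_month"] * job["cpu_hours_per_run"]
    print(f"{job['name']}: {monthly:.0f} CPU-hours per month")
```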

Conclusion

LLMs are transforming the way platform engineers approach their tasks, offering new avenues for improving developer efficiency. From enhancing deployment success rates and reducing deployment times to uncovering overlooked improvement areas, LLMs provide valuable assistance in optimizing the software development lifecycle.

These are just a few examples of how LLMs can be utilized. The potential applications are vast, and engaging with the community to explore further possibilities could lead to even more innovative solutions. As LLMs continue to evolve, their integration into platform engineering will likely become increasingly sophisticated, driving further advancements in the field. Sharing experiences and ideas within the community can help us collectively harness the full potential of these powerful tools.

—

Let's revolutionize Platform Engineering by putting developers first. Subscribe now to join me on this exciting journey!